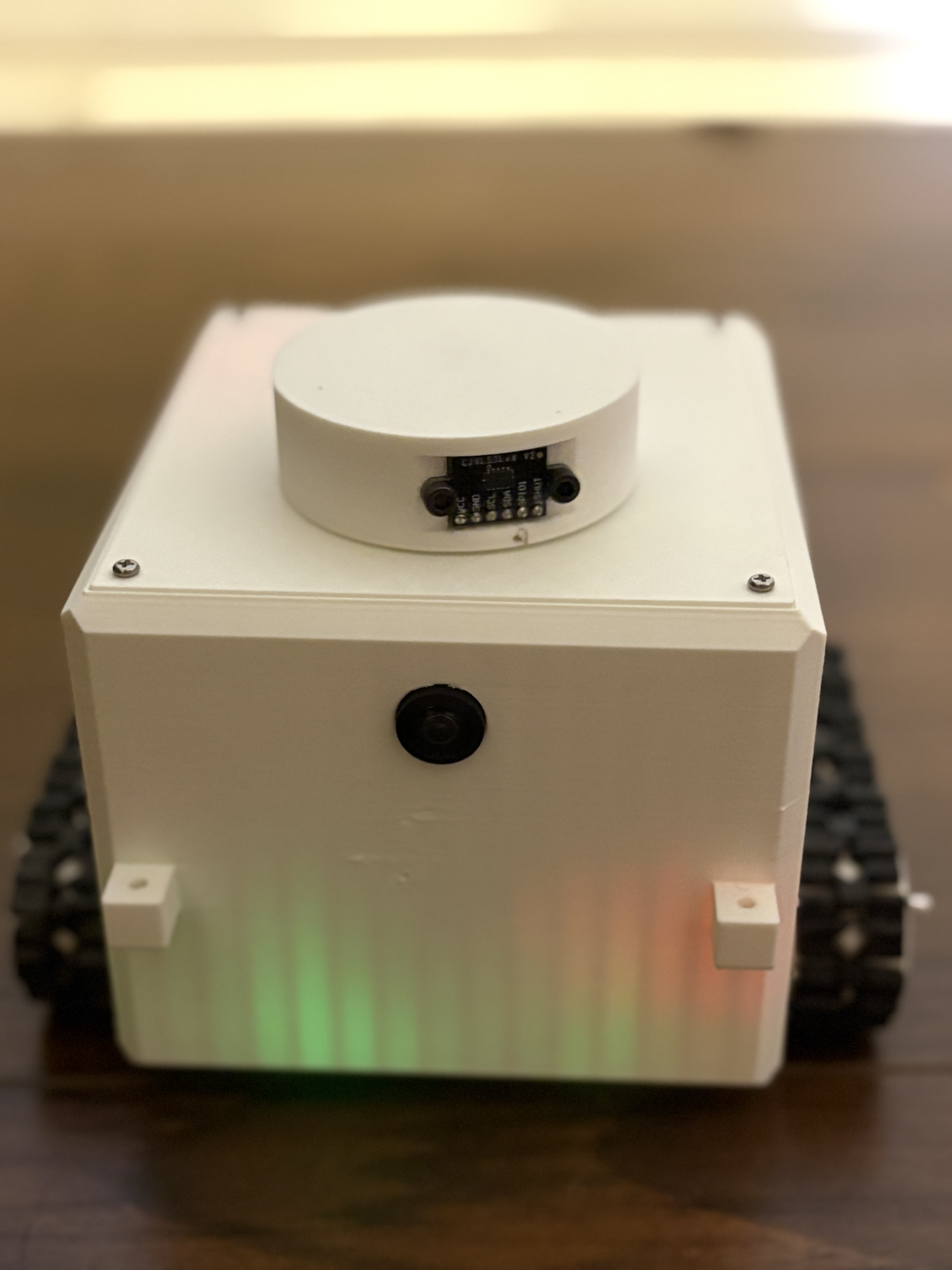

Observing Ants

GVRSF 2026 · Swarm Robotics · Photo Showcase

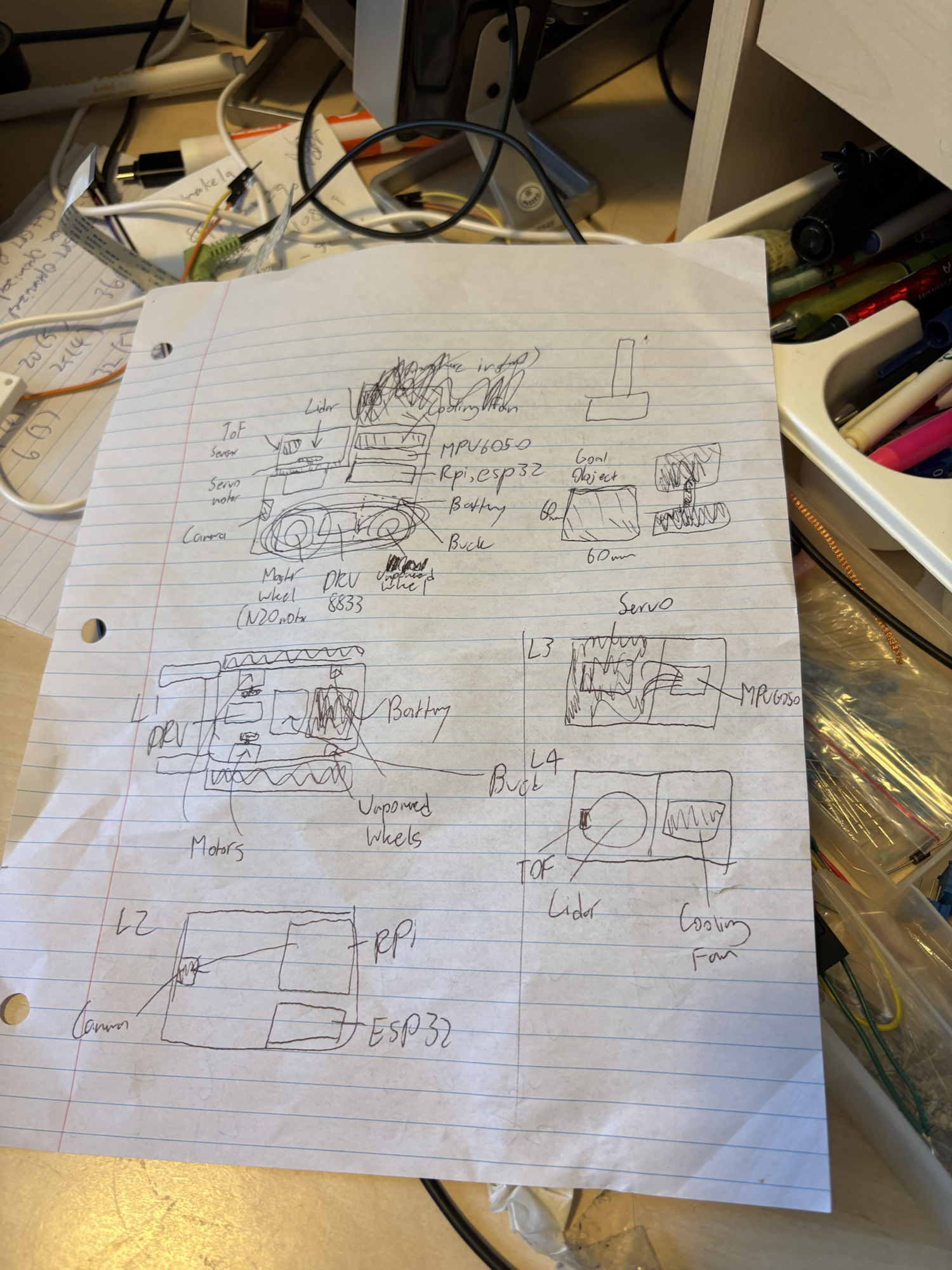

Artificial collective intelligence, shown through the robot, the diagrams, and the build.

This project explores carpenter ant-inspired swarm robotics through decentralized control, digital pheromones, Mission Control, and a physical robot platform. This page is a simple visual album with the core media and a direct link to the code.

Gallery

Videos

Making the Robots

Robots in Action

Mission Control

Code

Open-source repo, full project.

The full implementation, training stack, firmware, Mission Control, and deployment work live in the repository.